Intro

When I start writing a blog article, I don’t exactly know what to expect. Though… this is basically true in everyday conversation with me, as well. It’s hard to know what words are about to fly out of my mouth. It’s scary for everyone involved. That said… I expect this article to be kind of… epic. Equal parts technical, totally made up, and totally useful ![]()

As mentioned in this previous post on performance gotchas, … well, many things… but mostly that Power Pivot performance issues are all about memory. And that I loves me some Kasper’s Magic VBA Script that tells you how much memory each column of your model is using.

I was recently spending time trying to better understand the impact of various data formats, sort orders, columns that were correlated with another, columns that were ordered vs random, etc… and relying heavily on Kasper’s script. But the sizes I was seeing reported out of the script were often making no sense to me.

That left me cruising the internets for any information I could gather on the magic under the hood for how xVelocity/Vertipaq/whatever-you-call-it really works to store data. It is mostly secret-sauce so information is a bit light, but… I will try to describe what I think I found out ![]()

To be clear and transparent: I don’t know any of this to be totally correct. I don’t work for Microsoft… and if I did… I really couldn’t accurately describe the details here. That’s kind of what is meant by “secret”, you big silly. ![]() But, I think this is… quite close. And certainly close enough to be useful.

But, I think this is… quite close. And certainly close enough to be useful.

The Hash Table

This was my first “Well… Duh… of course they do that” moment. I saw it referred to as a hash table. Rather than storing big values (especially long strings) potentially many times in the column… they instead stick it into a table to store the value once and have a much shorter key to use in place of the long value when building the column data.

the column… they instead stick it into a table to store the value once and have a much shorter key to use in place of the long value when building the column data.

How much shorter? Well, as you can see in my cute table at right, 8 unique names can be stored in just 3 bits per entry. Ignoring null termination those strings are averaging 5 bytes a piece, so I am going to go with “quite a bit shorter” (more than 10x).

And we can actually infer quite a bit from this knowledge. Maybe most importantly… “more unique values takes more memory”, and not just because you have more rows. Your hash table gets bigger, too, and you need more bits to represent the increased number of unique values.

We can also infer that this would work well for all data types… not just strings. Datetime typically takes 8 bytes. Same with Doubles. Even a small int would be 2 bytes, which depending on the number of unique values could net a nice savings.

But, what if the hash table… doesn’t help?

Value Encoding

Pretend you have a bunch of integer values all “clustered” closely together. Say { 10010, 10011, 10012, 10013, 10015, 10016, 10017 }. Now, we could go build our hash table and with only 7 values we would only need 3 bits each… cool. But what if our column only uses each value… once? Was it worth the overhead of the hash table?

In this case… I could just subtract 10010 from each value… leaving { 0, 1, 2, 3, 5, 6, 7 }… without even needing a hash table. From what I understand, vertipaq indeed does something like this, which… kind of blows my mind. It just feels like it would be hard to programmatically figure out the best way to “math the numbers” to make this work. Really awesome.

Anyway, in the case of value encoding there is no hash table, just some magic to transform data from smaller values in the column store, back to their original value. Very fast, but only works with tight ranges of numbers. For data that is more “spread out”, the hash table would work better.

Run-Length Encoding

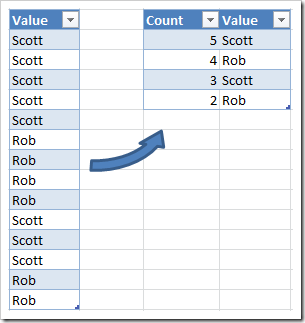

When I think of being able to compress a column of data… this is much more the type of  thing I would think of. You can read about RLE on Wikipedia, but I think even a glance at the diagram to the left gives you the basic idea. Instead of storing the value “Scott” 5 times, you just store a count (5) and the value “Scott”.

thing I would think of. You can read about RLE on Wikipedia, but I think even a glance at the diagram to the left gives you the basic idea. Instead of storing the value “Scott” 5 times, you just store a count (5) and the value “Scott”.

Well, technically you are probably storing the hashed value (that are just a handful of bits) instead of “Scott”, but that doesn’t really impact us here… the two techniques seem relatively independent.

And again, I am sure there is detection for “Hey, this data doesn’t have long runs of repeats and RLE isn’t helping…” and it just doesn’t bother. And maybe they pick and choose between several compression formats, not just RLE. The point is… compression, wahoo!

The practical inference we can make here is that (not surprisingly) if your data has long runs of the same value… it will compress better. Of course, that can depend on your sort order…

Sort Order

In theory, this is not something you need to worry about. And, even in practice, it is probably something you should not worry about … because it is hard to impact the engine here, and if you do… future product changes might rollback your hard work anyway.

That said, in my experimentation, choosing which column to sort first can have an impact on the amount of memory used. From what I read, the engine grabs the first million rows of data, inspects them, and decides for itself what order the columns should be sorted. So, it would seem we can lightly influence their heuristics by adjusting the sort order before it gets a chance to look.

Let’s briefly discuss why the sort order even matters. While we know that data is stored in columns, we have to realize that we also need to be able to pull out the whole row. I think fancy people call that “materialization” or something.

If I grab the sum of revenue for district=9… I obviously need to filter by the district column, but also need to know which row it gets filtered to… to grab the correlated value from the revenue column.

The implication is that… I can’t simply sort all the rows in every column! That’s only going to fly  for the first column. To maintain correct row order, I can only “sub-sort” the second column… for each of the distinct values in the first column.

for the first column. To maintain correct row order, I can only “sub-sort” the second column… for each of the distinct values in the first column.

See the table to the right. It is the same data… first sorted by Last name, and in the second pair, I sort by First name. There are the same number of distinct values in each column… however whichever column I sort first is going to compress better. But with either one, I can rebuild the full first and last name row.

Of course, if I had a 3rd column it would sort even worse. And so on. Giving rise to the oft repeated “lots of columns are very problematic for Power Pivot, especially in large fact tables”.

If my understanding is correct (quite a leap of faith really ![]() ), there are some other implications. For example, if data is “highly correlated” it will also compress better. If everybody with the last name “Smith” happened to be called “Ben”… those Ben’s will be perfectly sorted and highly compressed even though they are in the secondary column. I have not tested this, so don’t hold me to it… but it does make some sense, no?

), there are some other implications. For example, if data is “highly correlated” it will also compress better. If everybody with the last name “Smith” happened to be called “Ben”… those Ben’s will be perfectly sorted and highly compressed even though they are in the secondary column. I have not tested this, so don’t hold me to it… but it does make some sense, no?

Conclusion and Resources

I wish I had better kept track of where I learned most of the stuff here… so I could defer any blame on wrongness to the original source ![]() That said, I am sure some of it was gathered from the great talks at http://www.sqlbi.com/tv. Marco, when you see this… if you want to point at a specific video, please comment.

That said, I am sure some of it was gathered from the great talks at http://www.sqlbi.com/tv. Marco, when you see this… if you want to point at a specific video, please comment.

I also want to say some of it came from this document Performance Tuning of Tabular Models in SQL Server 2012 Analysis Services

Sadly, I did NOT watch this talk from TechEd 2012 before writing this blog entry… it looks like it would have been perfect! I only came across it while searching around to remember where I got my crazy ideas!

Lastly, in the intro I said “the sizes I was seeing reported out of the script were often making no sense to me.” And that is because, Kasper’s script is only keeping track of the size of the hash tables. It is not reporting any usage from Value Encoded columns, and not including the actual column data for hash encoded columns (just the hash table size). So, I have put some effort into updating the script and will post that next time!

- The streak is alive! – August 10, 2017

- DAX KEEPFILTERS Plus Bonus Complaining! – July 20, 2017

- VAR: The best thing to happen to DAX since CALCULATE() – July 11, 2017

- Review: Analyzing Data with Power BI and Power Pivot for Excel – June 20, 2017

- Power BI Date Table – June 2, 2017

Scott Senkeresty here… Founder and Owner of Tiny Lizard. Yes, I have done some stuff: A Master’s Degree in Computer Science from California Polytechnic State University in beautiful San Luis Obispo, California. Over twelve years developing software for Microsoft, across Office, Windows and Anti-Malware teams… Read More >>

I think that the video that better cover this topic is xVelocity Under the Hood: http://www.sqlbi.com/tv/xvelocity-under-the-hood/

Truly epic blog post! Thanks so much.

Thanks Scott. Looking forward to the updated VBA workbook.

Great article Scott!

Really good post. Based on y’all’s experience/ insights, is the size of the file a good measure of the performance of the PowerPivot? I ask because I have a datafile with 7m rows. Since the file contains several amount fields, I split the amount columns into 5 new columns – each column containing the 10,000th place value, 1, 000th, etc. To reduce the number of columns in the data model, I pivoted the dataset and assigned each amt type an id. So, I now have nearly 28m rows after I excluded the zero amount rows.

I loaded the new file and the file size didn’t change much compared to the unpivoted version. In my testing, I replaced all string columns with Int keys and the unique values of the dimensions keys is less than 100.

Before I release the model to the the masses, I don’t know how to benchmark whether the model is going to perform.

I have yet to really figure out how Excel decided to give back memory, it is a bit weird. So probably not a *great* measure, but better than nothing.

A few thoughts:

* check this post: http://tinylizard.com/script-update-what-is-eating-up-my-memory-in-power-pivot/ the script (especially a before changes and after) would tell you a lot.

* I know marco and alberto did some testing on splitting amount columns, and how many was reasonable. After 3 columns there was pretty strong diminishing returns. I don’t know that 5 will HURT you, but I never go past a “simple split”

* If you are doing simple math (sum of the amount), it will still be super fast. Filtering in a star schema, will also be lovely. If you end up doing some double hop lookups (snowflake) and especially if those look ups are on the bigger side… I won’t be surprised by perf issues.

* Tiny Lizard Rule #1: Don’t solve problems you don’t have. You have already gone to great lengths to optimize this model before you even… used it. Madness! Go enjoy your data.