The point of this post is not really calculating Person-Hours. I mean, we will show some measures on that, and if you got here by searching for that, and I helped you… ROCK ON! I’m happy to help.

But… I kind of thing there is value in just… talking about any old measure. Getting some reps in. Getting’ your learn on! Though, in this case, it will have a performance slant to the discussion, which is becoming a theme on this blog.

“People need to be reminded more often than they need to be instructed.”

– Samuel Johnson

A Simple Person-Hours Model:

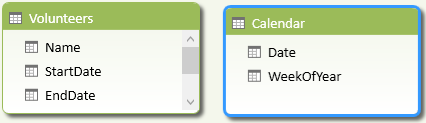

Okay, models don’t get a lot simpler than this and still have the luxury of being a called a model. We have two tables, and they aren’t even related.

Why? Well, I suppose we could try to link either StartDate or EndDate on Calendar[Date], but typically I don’t really want to filter out Volunteers that started or ended on a particular day.

Rather, we are interested in saying “for any given period of time, how many people were working?” 3 people working 1 day is 3 Person-Days. 1 person working 3 days… still 3 person days. It’s kind of like a Man-Hour (or Man-Month)… but less… well… sexist. ![]()

First Measure: via Calendar

I think this measure feels a bit more intuitive/natural and is probably what I would naively write first.

=SUMX (

Calendar,

CALCULATE (

COUNTROWS ( Volunteers ),

FILTER (

Volunteers,

Calendar[Date] >= Volunteers[StartDate] &&

Calendar[Date] <= Volunteers[EndDate]

)

)

)

Hopefully that doesn’t look too intimidating. This just says, “walk every day in the calendar, and for each day, count how many rows are active in the Volunteers table, assuming we filter to just those Volunteers that are active on that day”.

I have to admit, when this problem first came to me, the COUNTROWS was a DISTINCTCOUNT on the Name (errrr, well, probably Id) column. For the life of me, I can’t remember why – I suspect it was bad data (one person was in two places at once?), and it easier to use a DISTINCTCOUNT than to clean up the data. Anyway, THAT version was brutal on performance!

Anyway, if we are just straight mathin’ here … we are talking about { Rows in Calendar in Current Context } * { Rows in Volunteer } total iterations. (Regardless of COUNTROWS vs DISTINCTCOUNT). If you think about the grand total cell (where you are going to look at the most rows), the outer loop, you are getting no interesting optimization/filtering there, you gotta walk every day in the table… I really hope you trimmed your calendar table.

The inner loop is the FILTER( ) over the Volunteers table. While I could imagine some optimization there (quickly filtering out volunteers based on dates), I am skeptical. I mean, you better hope you don’t have a ton of unique start/end dates, but it doesn’t help that I am filtering by two columns. Generally a no-no for perf.

For the DISTINCTCOUNT version, each row in the Calendar table has to build out some memory store those distinct values… and keep track of that while walking each row in the Volunteers table. Generally, a DISTINCTCOUNT is not something to take lightly.

Second Measure: via Volunteers

Before we dig into another way to look at this measure, let me let you in on a Tiny Lizard secret. Don’t solve problems you don’t have. One of the nice things about Power Pivot is that it is very forgiving. It is so stinkin’ fast that you can happily write measures without really thinking about performance. Most problems boil down to the these performance gotchas. However, sometimes you have to put on your big boy pants… and this was one of those times.

SUMX (

FILTER (

Volunteers,

Volunteers[StartDate] <= MAX ( Calendar[Date] )

&& Volunteers[EndDate] >= MIN ( Calendar[Date] )

),

INT ( 1

+ IF (

MAX ( Calendar[Date] ) < Volunteers[EndDate],

MAX ( Calendar[Date] ),

Volunteers[EndDate]

)

– IF (

MIN ( Calendar[Date] ) > Volunteers[StartDate],

MIN ( Calendar[Date] ),

Volunteers[StartDate]

)

)

)

Oh, Simmer Down. It’s not that bad. What you would LIKE to write is a simple…

and if you only care about the final grand total GO NUTS! ![]() “For each volunteer, how many days did they work?”.

“For each volunteer, how many days did they work?”.

But likely you are going to want to drop some Calendar rows into your pivot and see this value change over time… so you need to do a bit more work (it’s not like we have any relationships to help us).

The first param to the SUMX uses a FILTER() that is more or less an optimization. “Only look at volunteers that were active at some point in this date window”. It’s not totally “just an optimization” because if you left it out… you will end up SUM’ing values for Volunteers totally outside this Calendar window, and that’s bad. Trust me, I know first hand.

Then the ugly IF() statements are to protect against a calendar window ending in the middle of a volunteer being active. (and similar for starting). So, if the cell in our pivot table is only looking at April, and this Volunteer worked from Apr 15 to May 15… we don’t want to count any days in May for this cell. That is all those IF()’s are for.

While this measure obviously looks more complicated, it is considerably faster. Where before we had roughly { Calendar Rows In Context } * { Volunteer Rows } iterations… here we have something smaller… { Volunteer Rows That are Active In Context }. There is some extra processing to figure out which are “Active”, but you don’t get that nasty multiplicative effect… since we are never walking all the rows in the Calendar.

Wrap Up

Could this be faster? I would be SHOCKED if it couldn’t be optimized further. The Ninja’s would be pulling out CALCULATETABLE instead of FILTER, and throwing around SUMMARIZE and ADDCOLUMNS and other fancy tricks. But that wasn’t really my point.

Other than just raw value of reading somebody else’s measures as a stepping stone to writing your own, your main take away is that in the rare cases that you do have a performance issues, and you have already walked “the gotchas” list… your next step is to think about the problem in a completely different way.

In our example, instead of thinking “for each day … how many people are working?” … we just flipped that around to “for each volunteer, how many days did they work?”… and we were able to get the performance into the “good enough” range without much effort.

Which is the way Tiny Lizard likes it.

- The streak is alive! – August 10, 2017

- DAX KEEPFILTERS Plus Bonus Complaining! – July 20, 2017

- VAR: The best thing to happen to DAX since CALCULATE() – July 11, 2017

- Review: Analyzing Data with Power BI and Power Pivot for Excel – June 20, 2017

- Power BI Date Table – June 2, 2017

Scott Senkeresty here… Founder and Owner of Tiny Lizard. Yes, I have done some stuff: A Master’s Degree in Computer Science from California Polytechnic State University in beautiful San Luis Obispo, California. Over twelve years developing software for Microsoft, across Office, Windows and Anti-Malware teams… Read More >>

This looks very much like the ‘events-in-progress’ problem I’ve blogged about several times:

http://cwebbbi.wordpress.com/2010/01/07/solving-the-%E2%80%98events-in-progress%E2%80%99-problem-in-dax/

http://cwebbbi.wordpress.com/2011/11/10/solving-the-events-in-progress-problem-in-dax-v2-0/

http://cwebbbi.wordpress.com/2013/06/13/a-new-events-in-progress-dax-pattern/

The second post shows my favourite way of solving the problem, but the third post (and Alberto’s work on optimising it even further) is where things start to get fun 🙂

Thanks Chris! I remember seeing that madness, but couldn’t remember what terms to search for 🙂